Not All Norms Are Created Equal

Over the past decade, the demand for objective testing in performance has grown, improving standards and training outcomes through more informed assessment and decision-making. However, testing alone has limitations in its application.

Test results and performance data often feel arbitrary without a reference standard or benchmark to which they are compared. Normative data provides the necessary context to categorize an individual’s performance in comparison to their age- and sex-matched peers.

Defining Normative Data

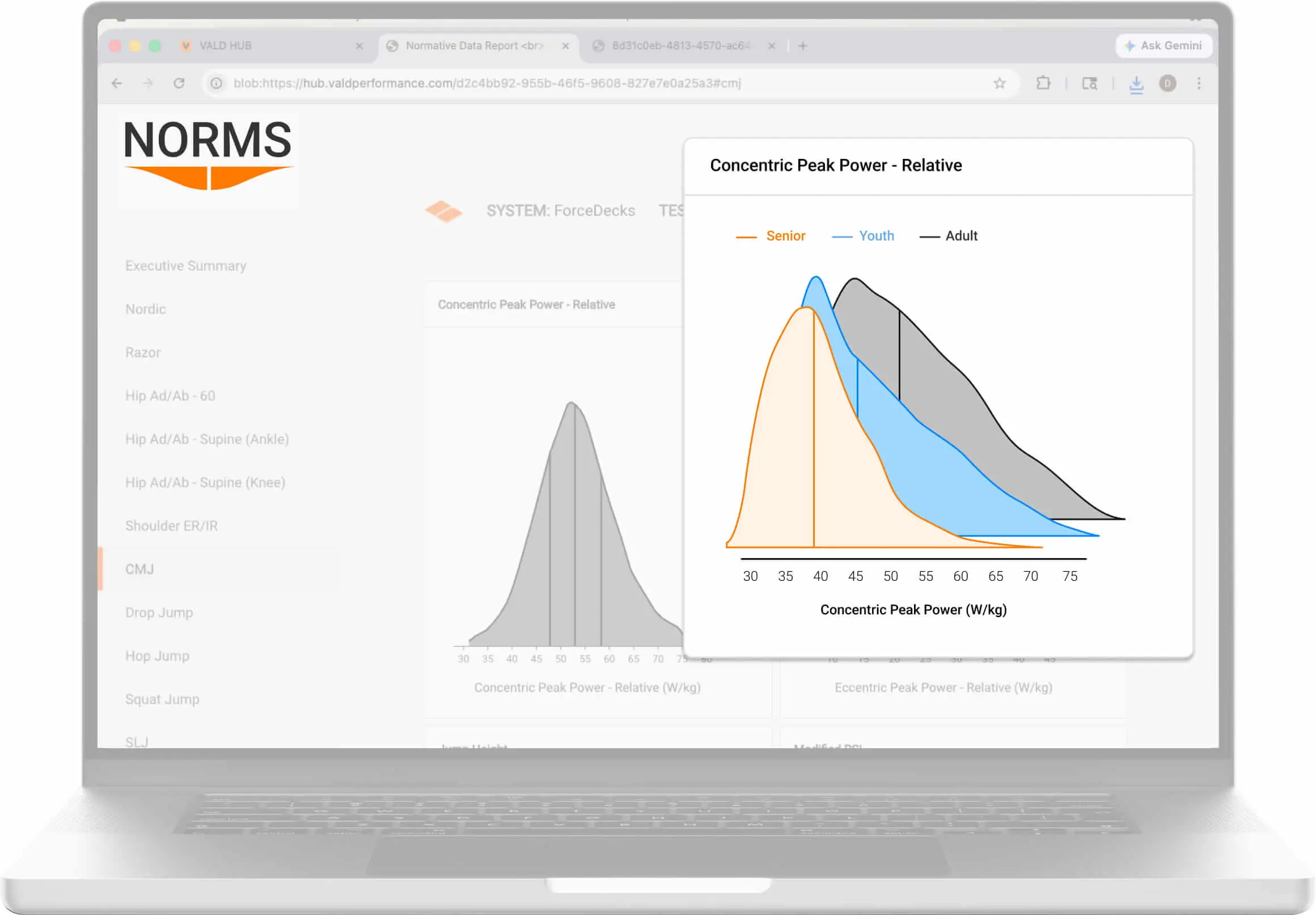

Normative data refers to reference values derived from a defined population that help practitioners understand how performance on a given test typically varies within that group. For example, normative data can be used to rank or categorize performance in tests such as a countermovement jump (CMJ), helping practitioners better understand performance status and sport-specific capacity in an athletic population.

These reference values are typically built from large datasets and are used to describe how people within a population perform relative to their peers. Individual performance data is most commonly ranked via percentiles (1st-100th), providing a practical frame of reference for interpreting whether a result is low, typical or high for the population in question.

Normative data density plots for CMJ concentric peak power displayed in VALD’s Normative Data Reports. For more information on normative data, refer to our What are Norms? Understanding normative data article.

Defining VALD Norms

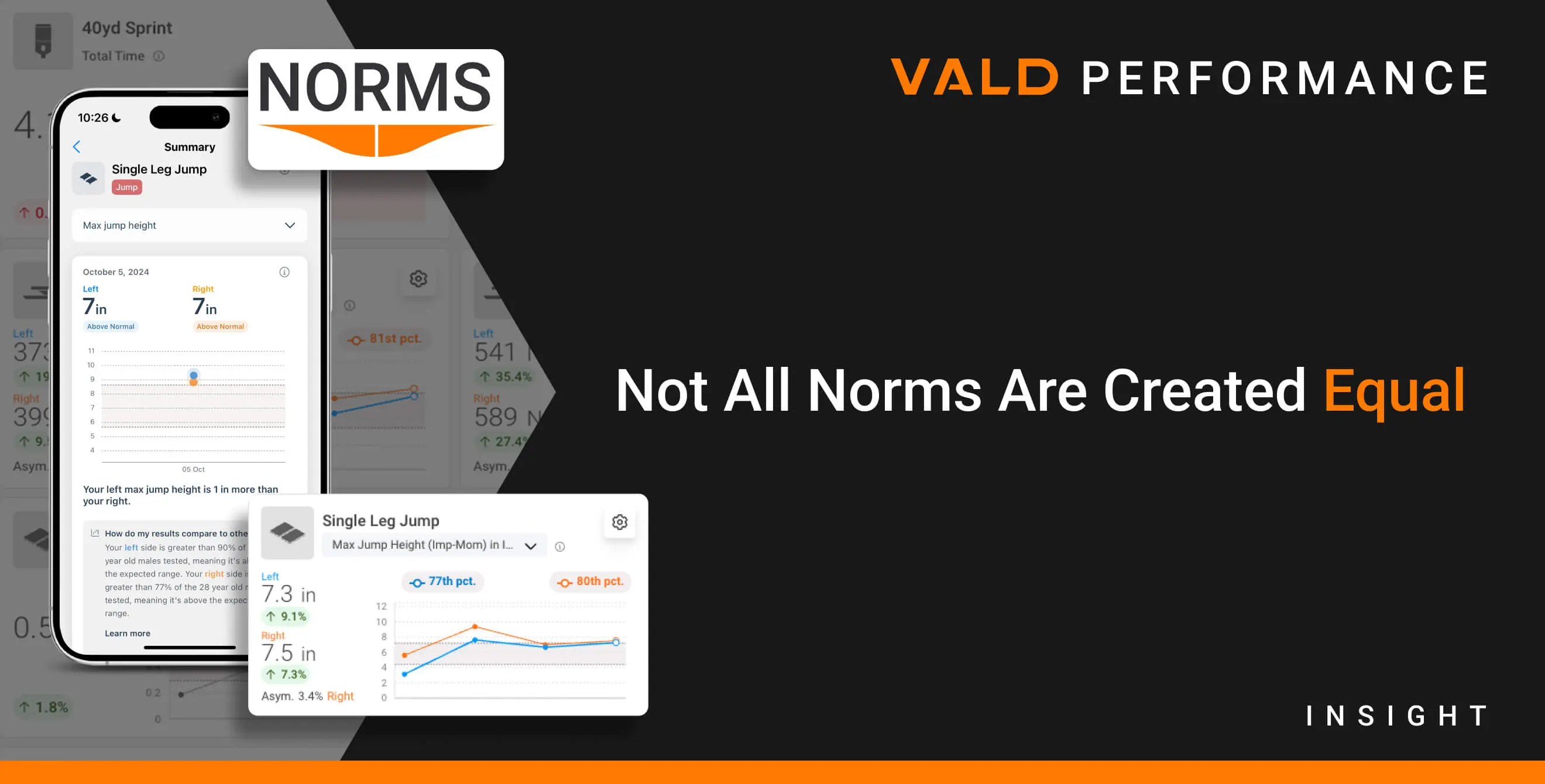

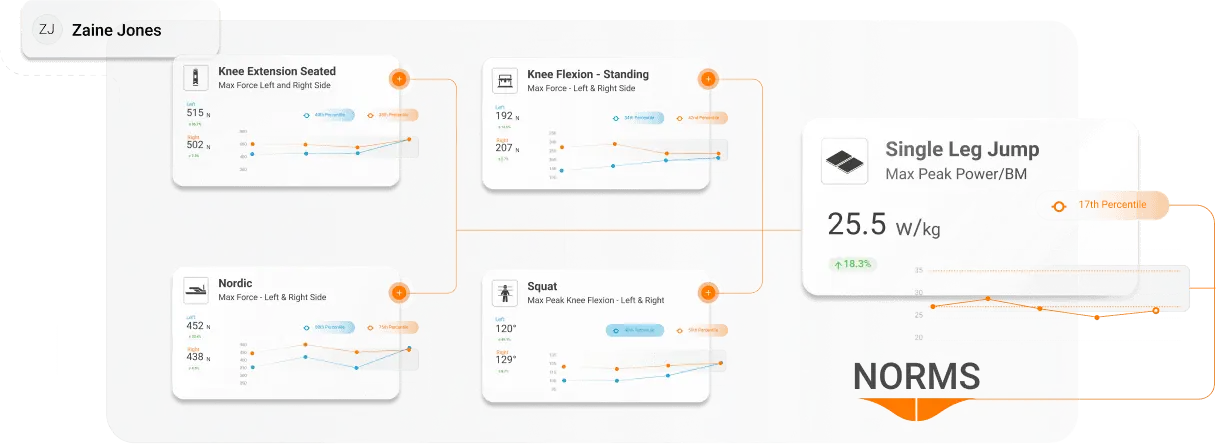

Within VALD, Norms (capital “N”) refer to normative data directly integrated into VALD systems. For example, practitioners can compare an individual’s strength, jump or movement data to age- and sex-matched reference values directly within VALD Hub or system-specific applications like ForceDecks or ForceFrame.

If you are new to VALD’s Norms, our Introducing VALD Norms article describes how they are integrated across VALD systems and applied in practice.

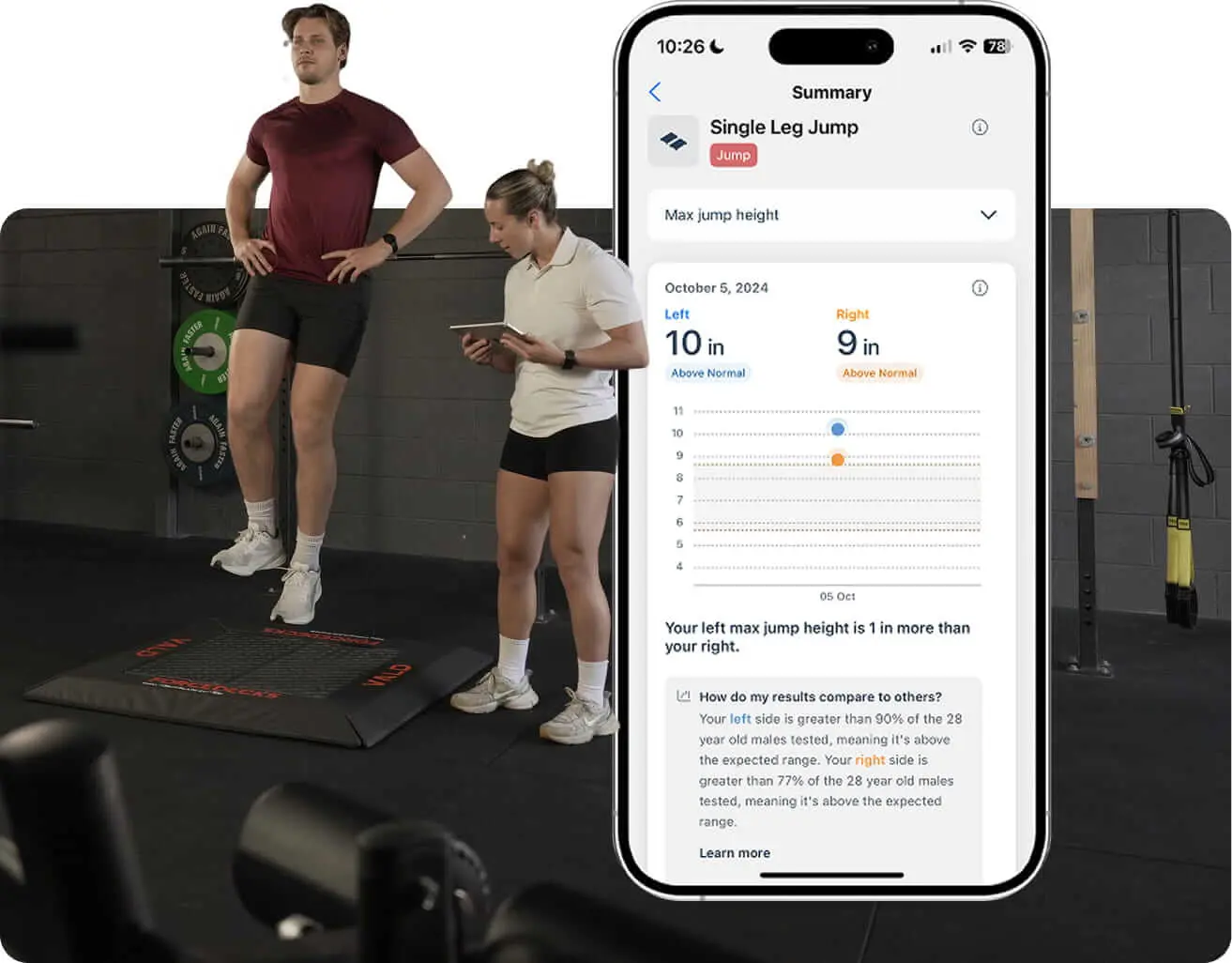

Norms are generated from cleaned, statistically analyzed datasets drawn from millions of test results. These reference values are available within VALD platforms such as VALD Hub and VALD mobile apps (e.g., the ForceDecks iOS app), providing practitioners with context to inform clinical and performance decision-making.

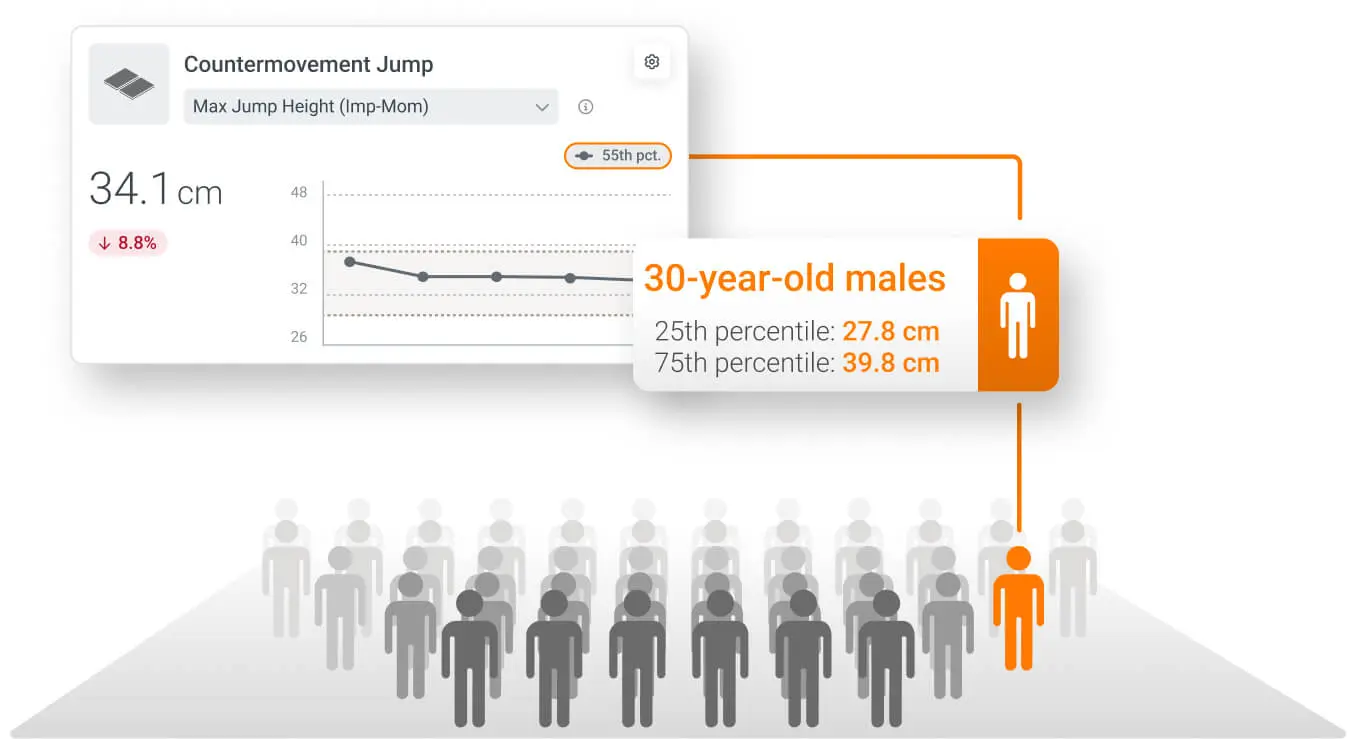

Norms are integrated in two formats: percentiles and descriptive ranges. Most commonly, they are presented numerically via percentiles, for example:

- A result in the 50th percentile represents the population median, or the typical “middle” result.

- A result in the 75th percentile indicates that the result is higher than 75% of the reference population.

Percentiles allow practitioners to quickly understand how a result compares to others with similar characteristics, while individuals using applications like MoveHealth or systems like HumanTrak can see Norms presented in descriptive ranges for ease of interpretation: below, within or above normal.
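To make the two formats concrete, here is a minimal Python sketch that ranks one result against a reference sample and then maps the rank to a descriptive range. It is an illustration only, not how VALD computes Norms: the reference values, the function names and the 25th/75th percentile cut-offs for "below", "within" and "above" normal are all assumptions made for this example.

```python
from bisect import bisect_left, bisect_right


def percentile_rank(reference, value):
    """Percentage of reference results below `value` (ties count as half)."""
    ordered = sorted(reference)
    below = bisect_left(ordered, value)
    ties = bisect_right(ordered, value) - below
    return 100.0 * (below + 0.5 * ties) / len(ordered)


def descriptive_range(rank, low=25.0, high=75.0):
    """Map a percentile rank to a plain-language band.

    The 25th/75th cut-offs are assumed for illustration only.
    """
    if rank < low:
        return "below normal"
    if rank > high:
        return "above normal"
    return "within normal"


# Hypothetical age- and sex-matched CMJ jump heights, in inches.
reference_heights = [12.1, 13.4, 14.0, 14.8, 15.2, 15.9, 16.3, 16.8, 17.5, 18.9]

rank = percentile_rank(reference_heights, 16.8)
print(f"{rank:.0f}th percentile, {descriptive_range(rank)}")
```

A real reference group would contain far more than ten results; the short list here only keeps the arithmetic easy to follow.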

Normative data comparisons in the MoveHealth app for both right and left sides, written in athlete-friendly language.

These values and insights support informed decision-making and provide individuals with clear context for interpreting progress relative to their peers.

Population-Specific Normative Data

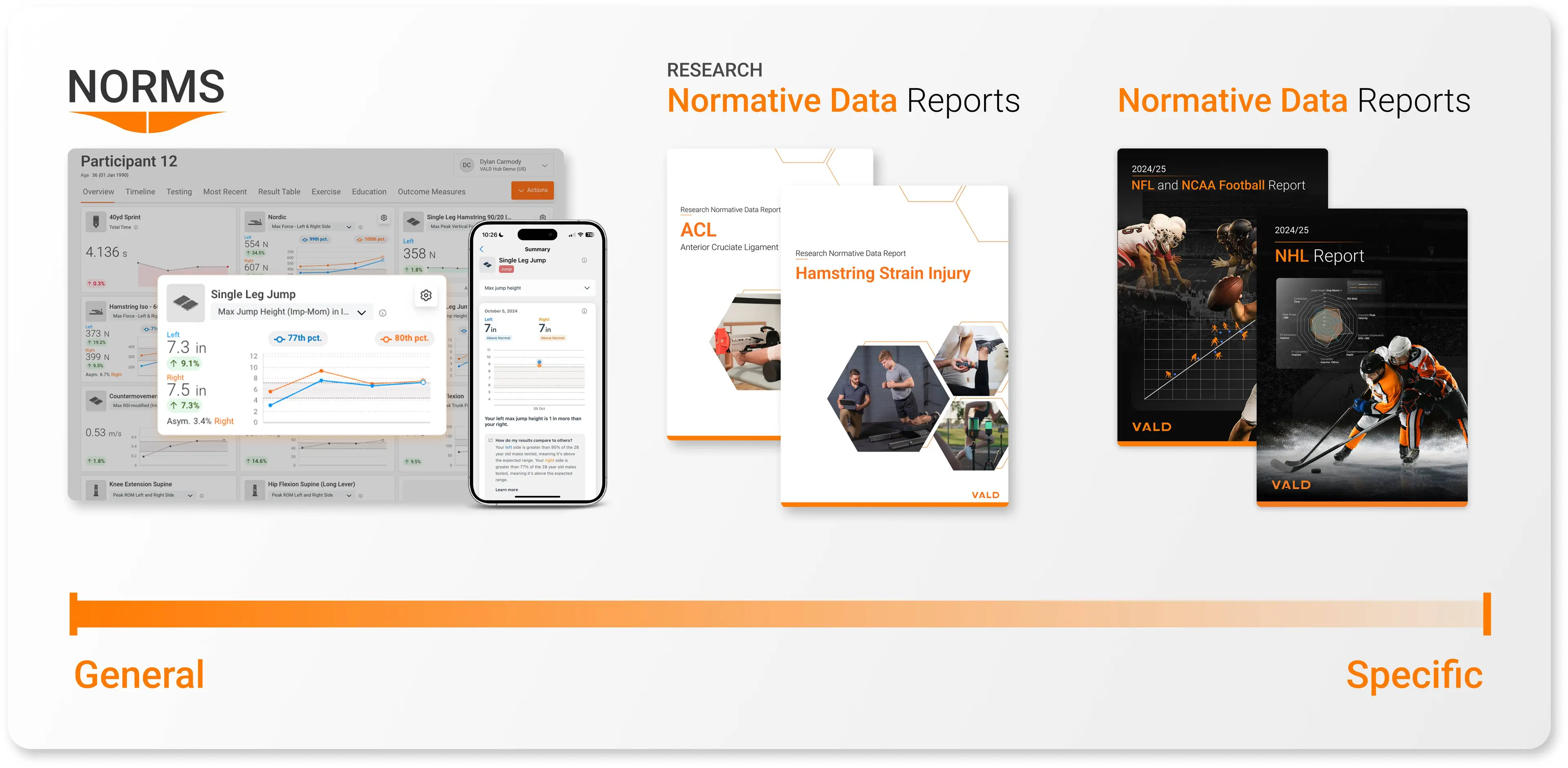

Alongside integrated datasets like Norms, practitioners can also use more specific normative data from published reports and research articles. These reports summarize performance trends within specific populations, often grouped by sport, position, level of competition or testing environment, and are designed to highlight how groups typically perform across common assessments.

For example, the 2024/25 NFL and NCAA Football Report, available in VALD Hub, describes the performance of National Football League (NFL) and Division I football athletes on common tests such as the CMJ, isometric mid-thigh pull (IMTP) and drop jump (DJ), among many others.

Similarly, VALD Hub has Research Normative Data Reports specific to pathologies like anterior cruciate ligament reconstruction (ACLR) and hamstring strain injury (HSI). These reports allow practitioners to reference research-based benchmarks throughout the rehabilitation process for both ACLR and HSI.

Norms and normative data vary in their level of specificity, which affects how they are best used in practice. As specificity increases, the reference point often becomes more relevant to the population being assessed.

Not All Normative Datasets Are the Same

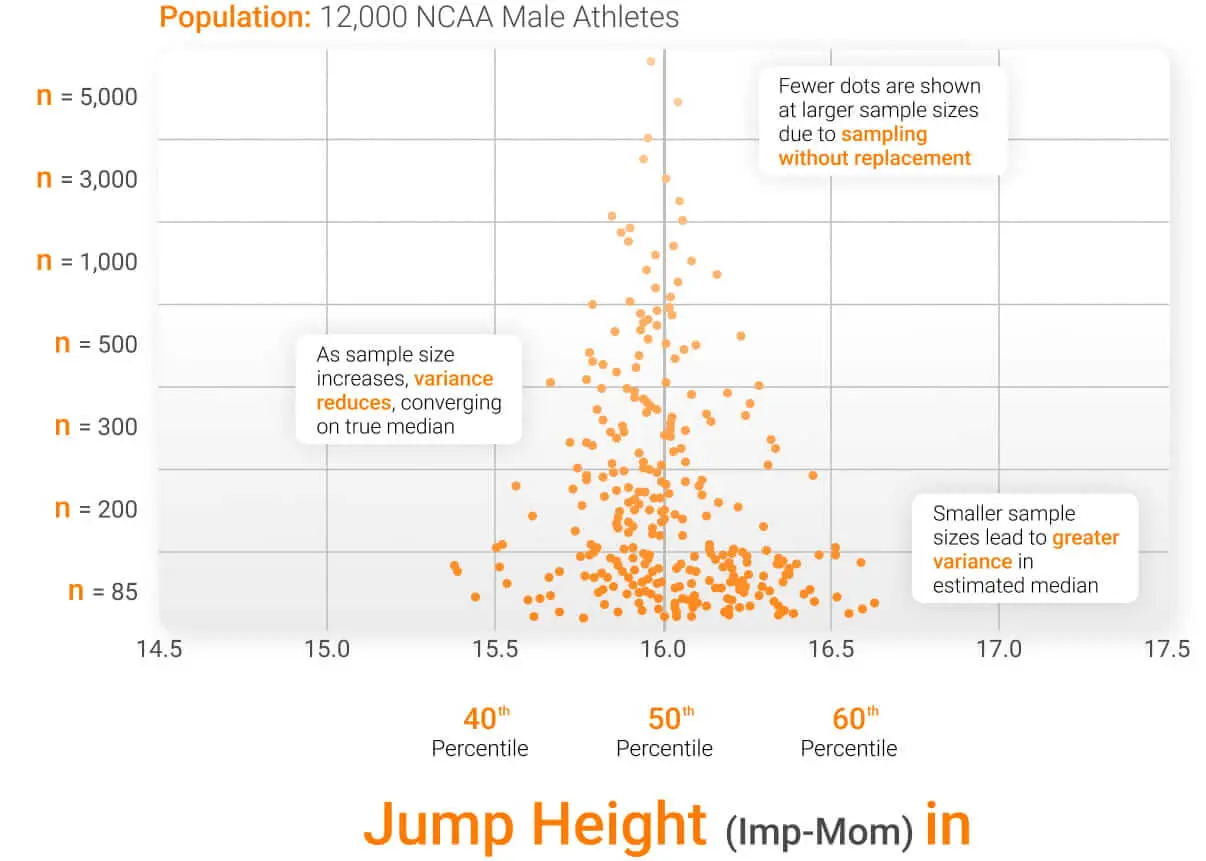

One of the most important factors influencing the reliability of normative data is its sample size. Small datasets (e.g., under 100 observations) often produce unstable estimates of population performance. With few observations, metric medians or percentile thresholds can shift dramatically as new data is added, making them unreliable reference values.

In the figure below, each orange dot represents the median jump height calculated from a different sample of the dataset. When the sample size is small (for example, 85 observations), the estimated median varies widely depending on which data points are selected, producing values between approximately 15.4 and 16.6 inches, a difference of over 7%. As the sample size increases, the estimates become more consistent and begin to converge toward the true population median.

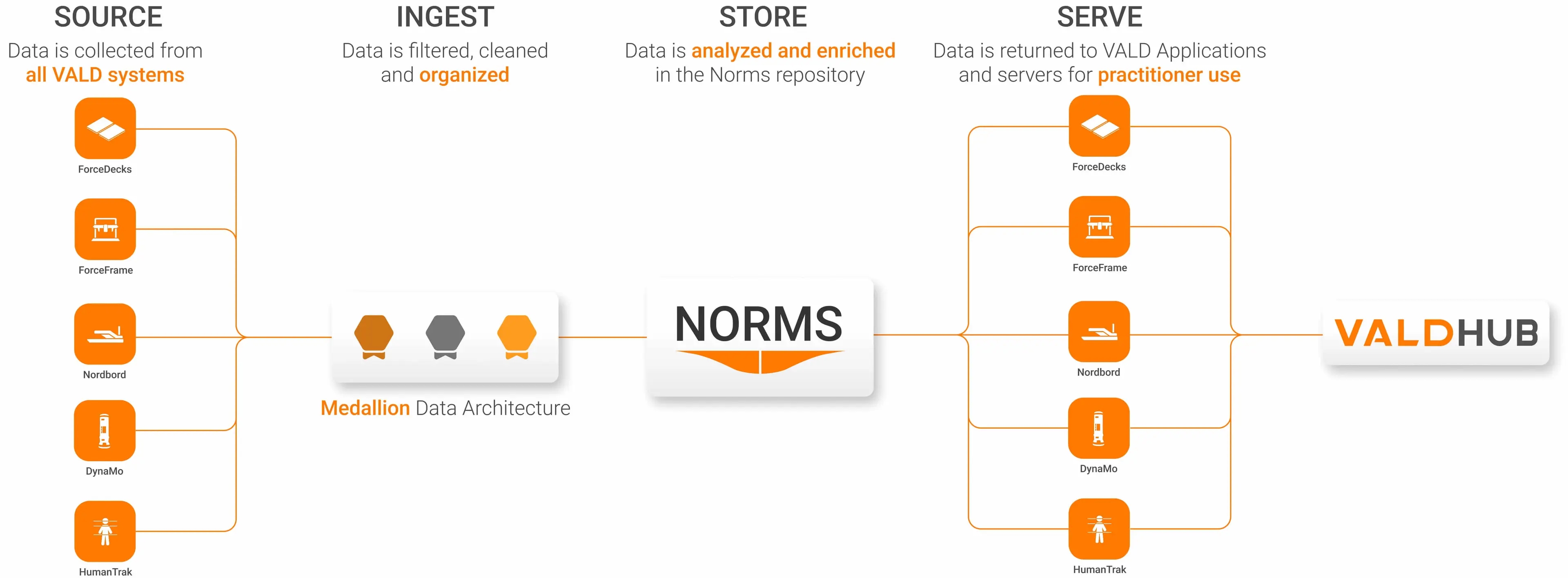
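The same effect can be reproduced with a small simulation. The Python sketch below repeatedly draws samples of different sizes from a simulated population and reports how far the sample medians range at each size. The population (jump heights centered on 16 inches with a 3-inch standard deviation), the number of draws and the function name are assumptions chosen for illustration; none of it is VALD data.

```python
import random
import statistics


def median_spread(population, sample_size, draws=200, seed=0):
    """Lowest and highest sample median across repeated random draws."""
    rng = random.Random(seed)
    medians = [
        statistics.median(rng.sample(population, sample_size))
        for _ in range(draws)
    ]
    return min(medians), max(medians)


# Hypothetical population: jump heights in inches, roughly normal around 16 in.
population_rng = random.Random(42)
population = [population_rng.gauss(16.0, 3.0) for _ in range(50_000)]

for n in (85, 500, 5_000):
    low, high = median_spread(population, n)
    print(f"n={n:>5}: medians from {low:.2f} to {high:.2f} in (spread {high - low:.2f})")
```

The spread of medians narrows as the sample size grows, which is why small subgroups make unstable reference values.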

This highlights the need for large volumes of data to stabilize normative datasets such as Norms. VALD delivers this by calling upon the Data Lakehouse, which is based on over 100 million tests captured from millions of individuals across tens of thousands of organizations. In practice, normative datasets ideally contain:

- Hundreds to thousands of observations per subgroup

- Clear separation by age, sex and test type

- Consistent testing protocols across organizations

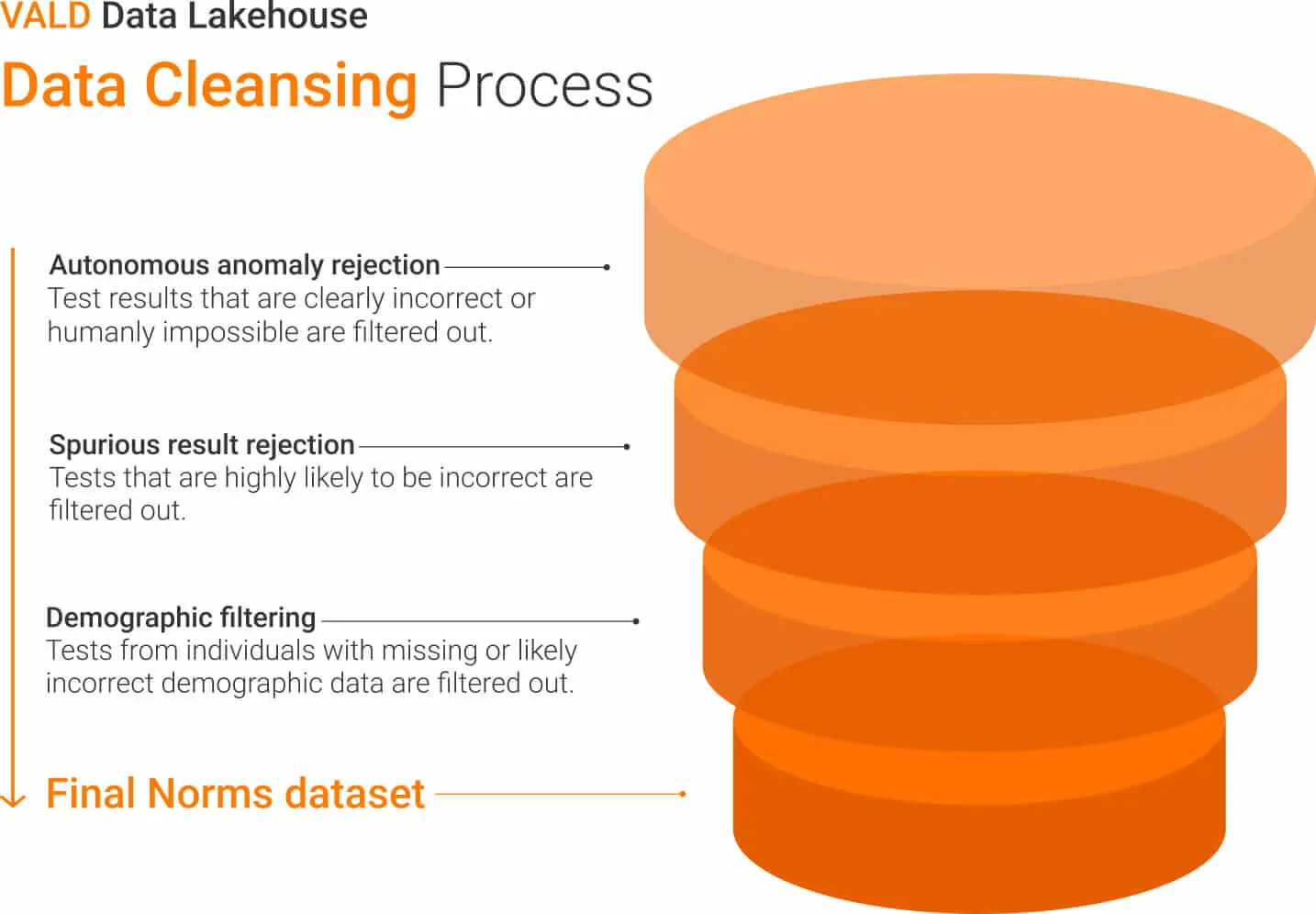

VALD’s data cleansing process controls for poor test execution and improper data through anomaly and spurious result rejection and demographic filtering.

However, dataset size alone does not guarantee quality. Normative datasets must also be carefully cleaned and validated before they can be used to generate reliable reference values. Inaccurate data can distort normative distributions and ultimately lead to misinformed decision-making.

Before normative reference values are generated, datasets undergo multiple filtering and validation steps to ensure the data is accurate and representative, as outlined in the accompanying figure.
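The article does not specify the exact rules VALD applies, so the Python sketch below is only a generic illustration of the two ideas named above: demographic filtering followed by rejection of spurious values. The record fields, the age limits and the interquartile-range fence (1.5 × IQR) are all assumptions made for this example.

```python
import statistics


def clean_results(records, min_age=18, max_age=35, k=1.5):
    """Keep records with usable demographics whose value passes an IQR fence.

    All thresholds here are hypothetical, not VALD's actual rules.
    """
    # Demographic filtering: drop records with missing or out-of-range fields.
    eligible = [
        r for r in records
        if r.get("sex") is not None
        and r.get("age") is not None
        and min_age <= r["age"] <= max_age
        and r.get("value") is not None
        and r["value"] > 0
    ]
    values = [r["value"] for r in eligible]
    if len(values) < 4:
        return eligible  # too few results to estimate quartiles sensibly

    # Spurious result rejection: discard values far outside the quartiles.
    q1, _, q3 = statistics.quantiles(values, n=4)
    fence_low = q1 - k * (q3 - q1)
    fence_high = q3 + k * (q3 - q1)
    return [r for r in eligible if fence_low <= r["value"] <= fence_high]


# Hypothetical jump heights in inches; 98.0 mimics a badly executed test.
raw = [
    {"sex": "F", "age": 24, "value": v}
    for v in (14.0, 15.0, 15.5, 16.0, 16.5, 17.0, 98.0)
]
raw.append({"sex": "F", "age": None, "value": 15.8})  # missing age
raw.append({"sex": "F", "age": 26, "value": -3.0})    # impossible value

cleaned = clean_results(raw)
print(f"{len(raw)} raw results, {len(cleaned)} kept")
```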

How Normative Data Is Calculated

Once datasets have been cleaned and validated, statistical modeling techniques are used to generate the Norms we see and use in VALD Hub.

Most normative datasets produce percentile curves that describe how performance changes across age and sex groups.

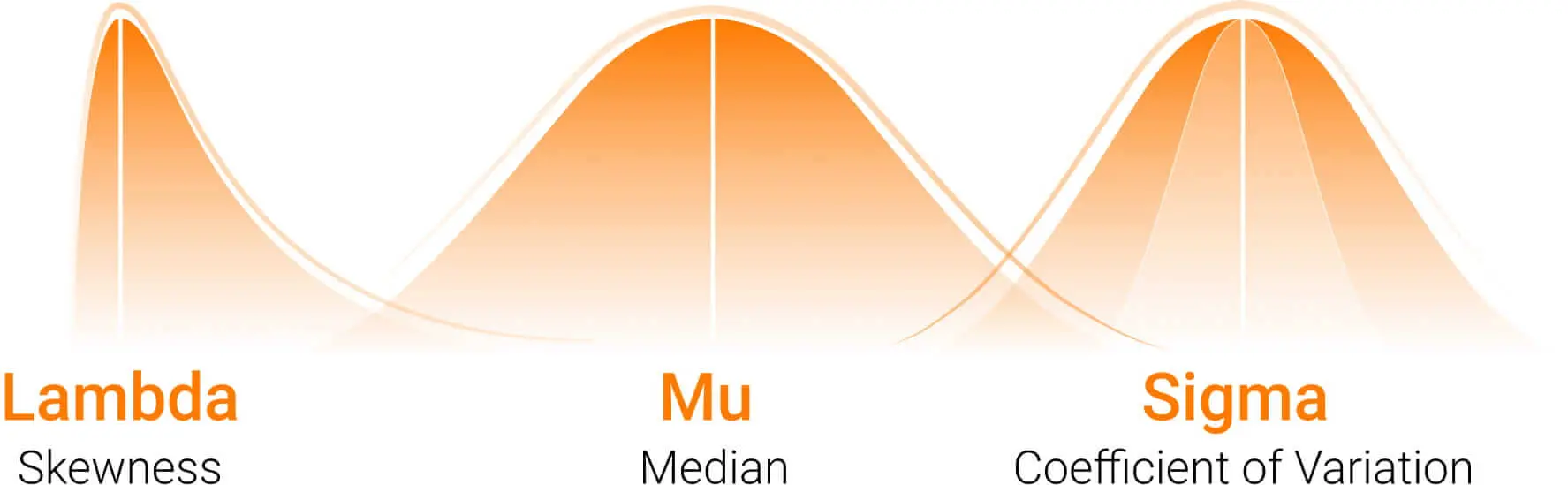

One commonly used method is the Lambda, Mu, Sigma (LMS) method, which has been widely applied to biological data to model age-related reference curves and generate smoothed percentile distributions across populations. The LMS approach models three parameters:

- Lambda (L): The Box-Cox power that accounts for distribution skewness (how symmetrical or asymmetrical the distribution curve appears)

- Mu (M): Population median (the point where half of the observations fall above and half of the observations fall below)

- Sigma (S): Coefficient of variation (the relative spread, describing how much variability exists around the median)

Together, these parameters allow percentile curves to be calculated across different ages and demographic groups for simple, effective comparison.
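As a rough illustration of how the three parameters combine, the Python sketch below applies the standard LMS transformation: a result is converted to a z-score using L, M and S, and the z-score is then converted to a percentile through the normal distribution. The parameter values are hypothetical and the function names are made up for this example; the sketch does not reproduce VALD's modeling pipeline, which would also fit L, M and S smoothly across age.

```python
import math
from statistics import NormalDist


def lms_z_score(value, L, M, S):
    """z-score of `value` given LMS parameters for one age/sex group."""
    if value <= 0 or M <= 0 or S <= 0:
        raise ValueError("value, M and S must be positive")
    if abs(L) < 1e-12:
        # Limiting case as L approaches 0: a log-normal distribution.
        return math.log(value / M) / S
    return ((value / M) ** L - 1.0) / (L * S)


def lms_percentile(value, L, M, S):
    """Percentile (0-100) of `value` under the LMS model."""
    return 100.0 * NormalDist().cdf(lms_z_score(value, L, M, S))


def lms_value_at_percentile(percentile, L, M, S):
    """Inverse: the result that sits at a given percentile."""
    z = NormalDist().inv_cdf(percentile / 100.0)
    if abs(L) < 1e-12:
        return M * math.exp(S * z)
    return M * (1.0 + L * S * z) ** (1.0 / L)


# Hypothetical parameters for one group (jump height in inches).
L, M, S = 0.8, 16.0, 0.18

print(f"18.0 in sits at percentile {lms_percentile(18.0, L, M, S):.1f}")
print(f"75th percentile is {lms_value_at_percentile(75, L, M, S):.2f} in")
```

A result equal to M always lands on the 50th percentile, which matches the definition of Mu as the population median.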

The Future of Normative Data

Advances in data science are expanding the capabilities of large-scale musculoskeletal datasets. As testing platforms continue to collect large volumes of data across diverse populations, normative datasets are becoming increasingly comprehensive.

VALD Hub tiles allow specific categorization and analysis of nearly all VALD test metrics.

VALD is continuously improving the delivery of high-quality normative data that accurately represents real-world populations. This includes bolstering population metadata, strengthening data quality checks and investigating additional statistical modeling techniques.

As datasets continue to grow, normative reference values will become increasingly precise and representative of real-world populations.

Key Takeaways

Normative data, including VALD’s Norms, plays an important role in turning objective measurements into usable data. By comparing individual results to population reference values, practitioners gain the context needed to interpret performance, identify potential limitations and guide decision-making.

However, the value of normative data depends on several key factors, including large and representative datasets, rigorous data cleaning and robust statistical modeling. When these elements are in place, normative data becomes a powerful tool for practitioners working across sport, health and performance settings.

To see how Norms can help contextualize testing and support clearer decision-making, explore VALD Hub or get in touch with our team.